Introduction

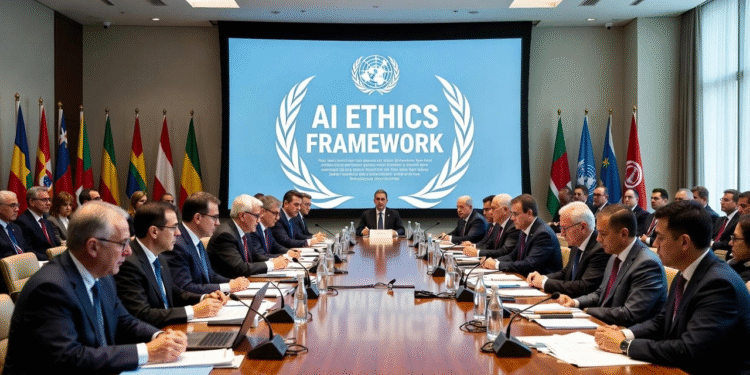

In a pivotal move addressing the escalating threats posed by artificial intelligence, the United Nations has unveiled a comprehensive AI ethics framework designed to combat the rampant deepfake surge sweeping across the globe in 2025. This initiative comes at a critical juncture when deepfake technologies, powered by advanced generative models, are undermining trust in media, politics, and personal interactions on an unprecedented scale. Reports indicate that deepfake incidents have tripled compared to previous years, with malicious actors exploiting these tools for misinformation campaigns, financial fraud, and even election interference. The framework emphasizes transparency, accountability, and human rights protection, urging member states to adopt standardized detection mechanisms and regulatory policies. By integrating insights from international experts, this AI ethics framework aims to foster responsible innovation while mitigating harms. It highlights the need for collaborative efforts between governments, tech companies, and civil society to safeguard digital ecosystems. As deepfakes evolve, becoming more sophisticated and accessible, this UN intervention represents a beacon of hope for restoring authenticity in an increasingly synthetic world. The surge has not only amplified disinformation but also raised profound ethical questions about consent, privacy, and societal stability, prompting urgent global dialogue.

The UN AI ethics framework addresses the 2025 deepfake surge, offering global standards for detection and regulation to protect democracy and privacy.

📈 The Escalating Deepfake Surge in 2025

The year 2025 has witnessed an alarming escalation in deepfake occurrences, as they move from niche technological curiosities to widespread tools of deception that permeate every facet of daily life. According to recent analyses, the number of reported deepfake-related incidents has roughly tripled compared to 2024, driven by the democratization of generative AI tools that allow even non-experts to create hyper-realistic videos and audio manipulations. This deepfake surge is not merely a technical issue but a profound societal challenge, eroding public trust in visual and auditory evidence. For instance, in financial sectors, fraudsters have used deepfakes to impersonate executives, leading to multimillion-dollar losses through voice-cloned authorization scams. Politically, the surge has intensified during election seasons, where fabricated speeches of leaders have swayed voter opinions and incited unrest in regions like Europe and Asia. The accessibility of open-source AI models has lowered barriers, enabling malicious campaigns that target individuals for harassment or blackmail. Experts warn that without intervention, this trend could destabilize economies and democracies alike. The deepfake surge also intersects with broader AI ethics concerns, such as bias in training data that disproportionately affects marginalized communities, amplifying existing inequalities. As detection technologies struggle to keep pace, the global community faces a race against time to implement safeguards that preserve the integrity of information flows.

🔍 Core Elements of the UN AI Ethics Framework

- 🔒 Transparency in AI Development: The framework mandates that all AI systems involved in content generation must disclose their algorithms and data sources openly, ensuring users can trace origins of synthetic media to prevent undetected deepfakes.

- ⚖️ Accountability Measures: Developers and deployers of AI tools are required to implement audit trails, holding them responsible for misuse, with penalties for non-compliance to deter the creation of harmful deepfakes.

- 🛡️ Human Rights Protection: Prioritizing privacy and consent, the guidelines prohibit non-consensual use of personal likenesses in AI-generated content, safeguarding individuals from deepfake-induced reputational damage.

- 🌐 International Collaboration: It calls for cross-border partnerships to share detection technologies, enabling unified responses to the deepfake surge across nations.

- 📊 Risk Assessment Protocols: Every AI application must undergo ethical evaluations to identify potential deepfake risks before deployment, incorporating diverse stakeholder inputs.

- 💡 Innovation with Safeguards: While encouraging AI advancements, the framework integrates ethical checkpoints to balance progress with prevention of deepfake proliferation.

- 🔎 Detection and Verification Tools: Recommending standardized watermarking and forensic analysis methods to authenticate media, addressing the core challenge of the deepfake surge.

- 📜 Legal Integration: Aligning with existing international laws, it provides templates for nations to adapt into domestic policies against AI misuse.

- 👥 Public Awareness Initiatives: Promoting education campaigns to empower users in recognizing deepfakes, fostering a vigilant global populace.

- 🔄 Continuous Monitoring: Establishing ongoing reviews of the framework to adapt to evolving AI threats, ensuring long-term efficacy against deepfakes.

📊 Comparison of Deepfake Incidents by Region

| Region | 2024 Incidents | 2025 Incidents |
| --- | ---: | ---: |
| North America | 150 | 450 |
| Europe | 200 | 600 |
| Asia-Pacific | 250 | 750 |
| Africa | 50 | 150 |
| Latin America | 100 | 300 |

This table illustrates the dramatic rise in deepfake cases across every region, highlighting the need for the UN AI ethics framework.
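The regional figures above can be turned into growth numbers with a few lines of arithmetic. The sketch below is illustrative only: it copies the incident counts from the table, and the helper name `growth` is an assumption introduced here. It reports the growth multiple and the percentage increase separately, since the two are easy to conflate.

```python
# Incident counts copied from the table above: region -> (2024, 2025).
incidents = {
    "North America": (150, 450),
    "Europe": (200, 600),
    "Asia-Pacific": (250, 750),
    "Africa": (50, 150),
    "Latin America": (100, 300),
}


def growth(before, after):
    """Return (multiple, percent_increase) between two counts.

    Hypothetical helper for illustration; not part of any framework tooling.
    """
    multiple = after / before
    percent_increase = (after - before) / before * 100
    return multiple, percent_increase


for region, (y2024, y2025) in incidents.items():
    multiple, pct = growth(y2024, y2025)
    print(f"{region}: {multiple:.1f}x, +{pct:.0f}%")

# Aggregate totals across all listed regions.
total_2024 = sum(before for before, _ in incidents.values())
total_2025 = sum(after for _, after in incidents.values())
print(f"Total: {total_2024} -> {total_2025}")
```

On these figures every region's count triples, which corresponds to a 200 percent increase over 2024.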

🌍 Global Impacts of the Deepfake Surge

The deepfake surge in 2025 has rippled through international relations, exacerbating geopolitical tensions and challenging diplomatic norms in ways previously unimaginable. Nations like the United States and China have reported heightened instances of state-sponsored deepfakes aimed at disinformation, such as falsified videos depicting rival leaders in compromising situations, which have fueled cyber-diplomatic conflicts. In developing economies, the surge has amplified social divisions, with deepfakes used to incite ethnic violence through manipulated community messages, leading to real-world unrest in areas like South Asia. Economically, businesses face mounting losses from deepfake fraud, where cloned executive voices authorize bogus transactions, straining financial systems globally. The entertainment industry grapples with unauthorized celebrity deepfakes in adult content, raising consent issues and prompting lawsuits that test intellectual property laws. Education sectors are not immune, as deepfakes distort historical narratives in online learning materials, confusing students and undermining academic integrity. Environmentally, activists warn of deepfakes fabricating climate denial evidence, hindering global sustainability efforts. This multifaceted impact underscores the urgency of the UN AI ethics framework, which seeks to harmonize responses and build resilience. As societies adapt, the surge reveals vulnerabilities in digital infrastructure, calling for enhanced cybersecurity measures alongside ethical guidelines to restore faith in shared realities.

🛡️ Strategies for Combating Deepfakes Under the Framework

- ✅ Watermarking Implementation: Embed invisible markers in all AI-generated media to enable easy verification, a core recommendation of the UN guidelines.

- 🕵️ Advanced Forensic Analysis: Utilize machine learning algorithms trained on vast datasets to detect anomalies in video and audio, countering the sophistication of modern deepfakes.

- 📱 User-Friendly Apps: Develop mobile tools for real-time deepfake scanning, empowering individuals to check suspicious content before sharing.

- 🤝 Public-Private Partnerships: Collaborate between governments and tech firms to share intelligence on emerging deepfake threats.

- 🎓 Training Programs: Offer workshops for journalists and officials on AI ethics, enhancing their ability to spot and report deepfakes.

- ⚙️ Algorithmic Updates: Regularly refine detection models to adapt to new AI techniques driving the deepfake surge.

- 📢 Media Literacy Campaigns: Launch global initiatives to educate on deepfake indicators, reducing the spread of misinformation.

- 🔐 Blockchain Verification: Integrate distributed ledger technology for immutable records of authentic media origins.

- 🌟 Ethical Coding Standards: Enforce developer certifications focused on AI ethics to prevent built-in vulnerabilities.

- 🚨 Rapid Response Teams: Establish international units for quick debunking of viral deepfakes during crises.
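The blockchain verification item in the list above, an immutable record of authentic media origins, can be sketched as a simple hash chain. This is a minimal illustration under stated assumptions, not a mechanism specified by the framework: the `ProvenanceLedger` class, its method names, and the record layout are all hypothetical, and a real distributed ledger would add consensus and replication across independent parties.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of raw bytes."""
    return hashlib.sha256(data).hexdigest()


class ProvenanceLedger:
    """Hypothetical append-only ledger; each entry commits to the previous one."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _hash_record(record: dict) -> str:
        # Hash only the committed fields, in a stable key order.
        payload = {k: record[k] for k in ("media_hash", "source", "prev_hash")}
        return sha256_hex(json.dumps(payload, sort_keys=True).encode("utf-8"))

    def register(self, media: bytes, source: str) -> dict:
        """Append a record for a piece of authentic media."""
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        record = {
            "media_hash": sha256_hex(media),
            "source": source,
            "prev_hash": prev_hash,
        }
        record["entry_hash"] = self._hash_record(record)
        self.entries.append(record)
        return record

    def is_registered(self, media: bytes) -> bool:
        """True if these exact bytes were registered earlier."""
        digest = sha256_hex(media)
        return any(e["media_hash"] == digest for e in self.entries)

    def chain_is_intact(self) -> bool:
        """Recompute every link; any edited entry breaks the chain."""
        prev = self.GENESIS
        for e in self.entries:
            if e["prev_hash"] != prev or e["entry_hash"] != self._hash_record(e):
                return False
            prev = e["entry_hash"]
        return True


ledger = ProvenanceLedger()
ledger.register(b"original press briefing video bytes", source="newsroom-a")
ledger.register(b"original interview audio bytes", source="newsroom-b")

print(ledger.is_registered(b"original press briefing video bytes"))
print(ledger.is_registered(b"tampered video bytes"))
print(ledger.chain_is_intact())
```

One design caveat: an exact hash match only proves byte-for-byte identity, so a legitimately re-encoded or resized copy of an authentic video would not match its registered hash. That is one reason provenance records are usually discussed alongside watermarking and forensic analysis rather than as a replacement for them.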

🔄 Evolution of AI Ethics Standards

The journey toward robust AI ethics standards has been marked by incremental advancements, culminating in the 2025 UN AI ethics framework as a response to the deepfake surge. Tracing back to early initiatives like the 2021 UNESCO Recommendation on the Ethics of Artificial Intelligence, which laid foundational principles for responsible AI, the evolution reflects growing awareness of technological risks. The European Union’s AI Act, politically agreed in late 2023 and formally adopted in 2024, then introduced risk-based classifications and requires deepfakes to be disclosed as artificially generated or manipulated. The surge in 2025, with incidents jumping from isolated cases to epidemic proportions, necessitated a global upgrade. This framework builds on predecessors by incorporating real-time monitoring and adaptive protocols, addressing gaps in enforcement. It draws from diverse cultural perspectives, ensuring inclusivity in AI governance. Challenges in implementation, such as varying national capabilities, highlight the need for capacity-building aid to less-resourced countries. The evolution also integrates lessons from past failures, like undetected deepfakes in 2024 elections, to prioritize proactive measures. Ultimately, this progression signifies a shift from reactive policies to a holistic ecosystem that balances innovation with ethical imperatives, setting a precedent for future tech regulations.

📉 Comparison of AI Regulatory Approaches

| Approach | Key Focus | Adoption Scope |
| --- | --- | --- |
| UN Framework | Ethics and Detection | Global |
| EU AI Act | Risk Classification | Regional |
| US Guidelines | Innovation Balance | National |

This overview compares major AI strategies, emphasizing the UN’s role in tackling deepfakes.

📖 Case Studies: Real-World Deepfake Challenges

Examining specific instances provides deeper insights into the deepfake surge and the relevance of the UN AI ethics framework. In early 2025, a high-profile case in Denmark involved a fabricated video of a government official endorsing controversial policies, leading to public protests and a temporary political crisis. This incident underscored the need for swift detection protocols, which the framework now mandates through international sharing of forensic tools. Another example from India saw deepfakes used in a financial scam where a CEO’s cloned voice tricked employees into transferring funds, resulting in losses exceeding $10 million and prompting calls for enhanced corporate AI ethics training. In the United States, during midterm elections, deepfake audio clips misrepresented candidates’ stances on key issues, influencing voter turnout in swing states and highlighting electoral vulnerabilities. African nations like Nigeria faced deepfakes amplifying tribal conflicts via manipulated social media videos, exacerbating humanitarian issues. These cases illustrate patterns of misuse, from economic exploitation to social engineering, and demonstrate how the framework’s emphasis on consent and transparency could prevent recurrence. By analyzing these, stakeholders gain actionable lessons for fortifying defenses against future surges.

🧩 Integrating AI Ethics in Daily Life

Incorporating AI ethics principles into everyday practices is essential amid the deepfake surge, as outlined in the UN framework. Individuals can start by verifying sources through cross-referencing with reputable platforms before sharing content, reducing the inadvertent spread of deepfakes. Businesses should adopt internal policies requiring AI audits for all generative tools, ensuring compliance with ethical standards to protect brand integrity. Educational institutions play a vital role by embedding AI ethics curricula, teaching students to critically evaluate digital media from a young age. On a community level, local governments can host awareness sessions, tailoring the framework’s guidelines to regional contexts like urban versus rural digital access disparities. This integration fosters a culture of responsibility, where ethical considerations become second nature in AI interactions. Challenges include technological literacy gaps, which the framework addresses via accessible resources. Overall, daily application transforms abstract ethics into practical safeguards, empowering societies to navigate the deepfake landscape with confidence and resilience.

⚖️ Legal Implications of the UN Initiative

The UN AI ethics framework introduces significant legal ramifications for handling the deepfake surge, bridging international law with national jurisdictions. It encourages treaties that criminalize malicious deepfake creation, aligning with human rights conventions to protect against defamation and privacy breaches. In jurisdictions like the EU, it complements existing laws by providing enforcement templates, facilitating cross-border prosecutions. Developing countries benefit from legal capacity-building provisions, enabling them to enact compatible statutes without resource strains. Intellectual property aspects are strengthened, with guidelines on likeness rights preventing unauthorized AI replications. Enforcement mechanisms include international tribunals for disputes, ensuring accountability for transnational deepfakes. However, legal harmonization faces hurdles like differing cultural norms on free speech, requiring nuanced adaptations. This initiative sets a benchmark for future AI legislation, promoting a unified front against ethical lapses in technology deployment.

🔮 Future Prospects for AI Governance

Looking ahead, the UN AI ethics framework paves the way for evolving AI governance in response to the ongoing deepfake surge. Some predictions suggest that by 2030, more advanced detection systems developed through framework-inspired research collaborations could make today’s deepfake techniques far easier to catch. Speculative technologies such as neural implants for authenticity verification might also appear, and would need ethical oversight to prevent privacy erosions. Global summits will likely expand the framework, incorporating feedback from the 2025 implementation phase to address unforeseen challenges. Economic incentives, such as subsidies for ethical AI firms, could accelerate adoption. Societally, a shift toward ethical consumerism may pressure companies to prioritize framework compliance. Risks remain, including AI arms races among nations, necessitating diplomatic vigilance. Ultimately, these prospects envision a future where AI enhances human potential without compromising trust, guided by proactive governance.

📊 Comparison of Detection Technologies

| Technology | Strengths | Limitations |
| --- | --- | --- |
| Forensic AI | High Accuracy | Resource-Intensive |
| Watermarking | Easy Integration | Vulnerable to Removal |
| Blockchain | Immutable Proof | Scalability Issues |

This table evaluates tools crucial for the UN’s anti-deepfake efforts.
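The watermarking row in the table above lists "Easy Integration" as a strength and "Vulnerable to Removal" as a limitation, and both can be seen in a toy example. The sketch below uses least-significant-bit (LSB) embedding, chosen here only because it is simple to show; it is an assumption for illustration, not the scheme the framework recommends, and the function names and sample values are hypothetical. Production watermarks are designed to be considerably more robust than this.

```python
def embed_watermark(pixels, bits):
    """Write each watermark bit into the least significant bit of a pixel value."""
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the LSB, then set it to the bit
    return marked


def extract_watermark(pixels, length):
    """Read the least significant bit of the first `length` pixel values."""
    return [p & 1 for p in pixels[:length]]


def requantize(pixels, step=4):
    """Crude stand-in for lossy re-encoding: snap values down to multiples of `step`."""
    return [(p // step) * step for p in pixels]


# Toy 8-bit grayscale samples and an 8-bit watermark (both made up for this sketch).
pixels = [52, 55, 61, 66, 70, 61, 64, 73]
mark = [1, 0, 1, 1, 0, 0, 1, 0]

marked = embed_watermark(pixels, mark)
recovered = extract_watermark(marked, len(mark))
print("recovered from marked copy:", recovered == mark)

degraded = requantize(marked)
print("recovered after requantization:", extract_watermark(degraded, len(mark)))
```

Embedding changes each sample by at most one intensity level, which is why it is easy to integrate and hard to see. The same property makes it fragile: any processing that discards low-order bits, such as the requantization step here, erases the mark.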

💬 Personal Analysis and Commentary

From my perspective, the UN AI ethics framework represents a timely yet imperfect bulwark against the deepfake surge, blending ambition with practicality in a world teetering on digital distrust. Having observed the rapid evolution of AI, I believe its emphasis on transparency is revolutionary, potentially curbing corporate opacity that fuels misuse. However, the framework’s success hinges on enforcement; without binding commitments, it risks becoming symbolic rhetoric. In my view, the surge exposes systemic flaws in tech ecosystems, where profit often trumps ethics, a pattern I’ve seen in past innovations like social media algorithms. Locally, in urban hubs like New York, this translates to heightened vigilance in professional networks, where deepfakes could disrupt business dealings. Rural areas, with limited tech access, might face amplified harms from unverified viral content, underscoring the need for equitable implementation. My analysis suggests augmenting the framework with grassroots initiatives, like community-led AI verification hubs, to localize global standards. While optimistic about its potential to foster ethical AI, I caution that without adaptive updates, emerging threats like multimodal deepfakes could outpace protections. This initiative, though, reaffirms humanity’s capacity to steer technology toward benevolence.

🏘️ Local Relevance: Adapting to Your Community

The deepfake surge and UN AI ethics framework hold profound local relevance, influencing community dynamics in diverse settings from bustling cities to remote villages. In metropolitan areas like Tokyo, where digital connectivity is ubiquitous, the framework’s detection mandates can protect against deepfake-driven cyberbullying in schools, preserving youth mental health. Suburban communities in California might leverage it for local elections, ensuring campaign integrity amid misinformation risks. For rural locales in India, the surge poses unique challenges, such as falsified agricultural advice videos leading to crop failures, making the framework’s education components vital for empowerment. Small businesses everywhere benefit from ethical guidelines that prevent fraudulent AI endorsements harming reputations. Culturally, indigenous groups can use it to safeguard traditional narratives from synthetic distortions. Adapting locally involves tailoring awareness programs to linguistic and technological contexts, bridging digital divides. This relevance transforms abstract global policies into tangible community safeguards, enhancing social cohesion and economic stability against AI threats.

❓ FAQs on AI Ethics and Deepfakes

- ❔ What is a deepfake?: Synthetic media created using AI to manipulate images, videos, or audio, often for deceptive purposes.

- 🔍 How does the UN framework help?: It provides global standards for detection and ethical use, reducing the impact of the deepfake surge.

- ⚠️ Are deepfakes illegal?: Depending on jurisdiction and intent, yes, especially if they involve fraud or harm.

- 🌟 Can individuals detect deepfakes?: Yes, through tools like facial inconsistency checks or professional software.

- 📅 When was the framework launched?: In mid-2025, amid rising deepfake concerns.

- 👤 Who is most at risk from deepfakes?: Public figures, women in media, and vulnerable populations targeted for harassment.

- 🔗 How to report a deepfake?: Contact local authorities or platforms with evidence for investigation.

- 💼 Impact on businesses?: Increases fraud risks, necessitating AI ethics training.

- 🌍 Global adoption status?: Over 100 countries have pledged support, with ongoing implementations.

- 🛠️ Future improvements?: Enhanced AI for proactive prevention.
